要获得与亚马逊 Timestream 类似的功能 LiveAnalytics,可以考虑适用于 InfluxDB 的亚马逊 Timestream。它为实时分析提供了简化的数据摄取和个位数毫秒的查询响应时间。点击此处了解更多。

本文属于机器翻译版本。若本译文内容与英语原文存在差异,则一律以英文原文为准。

UNLOAD

Timestream for LiveAnalytics 支持将UNLOAD命令作为其 SQL 支持的扩展。中描述了UNLOAD支持的数据类型支持的数据类型。time和unknown类型不适用于UNLOAD。

UNLOAD (SELECT statement) TO 's3://bucket-name/folder' WITH ( option = expression [, ...] )

选项在哪里

{ partitioned_by = ARRAY[ col_name[,…] ] | format = [ '{ CSV | PARQUET }' ] | compression = [ '{ GZIP | NONE }' ] | encryption = [ '{ SSE_KMS | SSE_S3 }' ] | kms_key = '<string>' | field_delimiter ='<character>' | escaped_by = '<character>' | include_header = ['{true, false}'] | max_file_size = '<value>' }

- SELECT 语句

-

用于从一个或多个 Timestream 中为 LiveAnalytics 表选择和检索数据的查询语句。

(SELECT column 1, column 2, column 3 from database.table where measure_name = "ABC" and timestamp between ago (1d) and now() ) - 收件人条款

-

TO 's3://bucket-name/folder'或

TO 's3://access-point-alias/folder'语

TO句中的子UNLOAD句指定查询结果输出的目的地。您需要提供完整路径,包括 Amazon S3 存储桶名称或 Amazon S3,以及在 Amazon S3 access-point-alias 上 LiveAnalytics写入输出文件对象的 Timestream 上的文件夹位置。S3 存储桶应归同一账户所有,且位于同一区域。除了查询结果集之外,Timestream 还会将清单和元数据文件 LiveAnalytics 写入指定的目标文件夹。 - PARTITIONED_BY 子句

-

partitioned_by = ARRAY [col_name[,…] , (default: none)查询中使用该

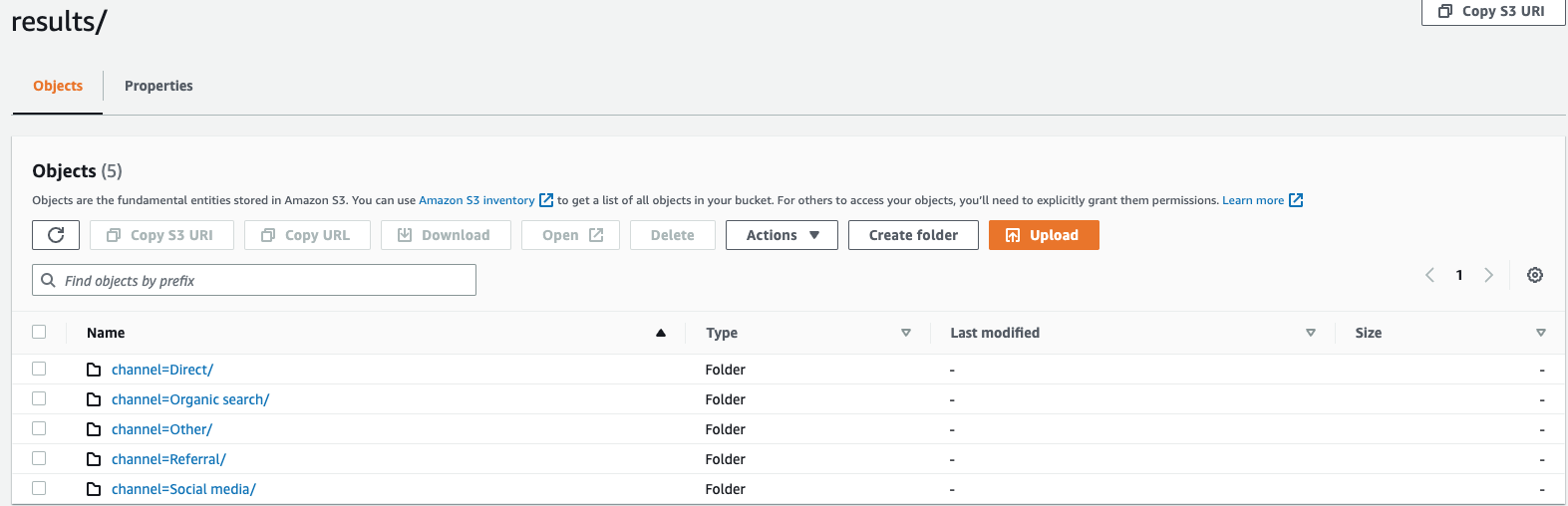

partitioned_by子句对数据进行精细分组和分析。将查询结果导出到 S3 存储桶时,您可以选择根据选择查询中的一列或多列对数据进行分区。对数据进行分区时,根据分区列将导出的数据分成子集,每个子集存储在单独的文件夹中。在包含导出数据的结果文件夹中,将自动创建一个folder/results/partition column = partition value/子文件夹。但是,请注意,分区列不包含在输出文件中。partitioned_by不是语法中的必备子句。如果您选择在不进行任何分区的情况下导出数据,则可以在语法中排除该子句。假设您正在监控网站的点击流数据,并且有 5 个流量渠道

direct,即Social Media、Organic SearchOther、和Referral。导出数据时,您可以选择使用列对数据进行分区Channel。在您的数据文件夹中s3://bucketname/results,您将有五个文件夹,每个文件夹都有各自的频道名称,例如,s3://bucketname/results/channel=Social Media/.在此文件夹中,您将找到通过该Social Media渠道登陆您网站的所有客户的数据。同样,剩下的频道也将有其他文件夹。按频道列分区的导出数据

- FORMAT

-

format = [ '{ CSV | PARQUET }' , default: CSV用于指定写入您的 S3 存储桶的查询结果格式的关键字。您可以使用逗号 (,) 作为默认分隔符将数据导出为逗号分隔值 (CSV),也可以以 Apache Parquet 格式(一种用于分析的高效开放列式存储格式)导出。

- 压缩

-

compression = [ '{ GZIP | NONE }' ], default: GZIP您可以使用压缩算法 GZIP 压缩导出的数据,也可以通过指定选项将其解压缩。

NONE - ENCRYPTION

-

encryption = [ '{ SSE_KMS | SSE_S3 }' ], default: SSE_S3Amazon S3 上的输出文件使用您选择的加密选项进行加密。除了您的数据外,还会根据您选择的加密选项对清单文件和元数据文件进行加密。我们目前支持 SSE_S3 和 SSE_KMS 加密。SSE_S3 是一种服务器端加密,Amazon S3 使用 256 位高级加密标准 (AES) 加密对数据进行加密。SSE_KMS 是一种服务器端加密,用于使用客户管理的密钥对数据进行加密。

- KMS_KEY

-

kms_key = '<string>'KMS 密钥是客户定义的密钥,用于加密导出的查询结果。KMS 密钥由 AWS 密钥管理服务 (AWS KMS) 安全管理,用于加密 Amazon S3 上的数据文件。

- 字段分隔符

-

field_delimiter ='<character>' , default: (,)以 CSV 格式导出数据时,此字段指定一个 ASCII 字符用于分隔输出文件中的字段,例如竖线字符 (|)、逗号 (,) 或制表符 (/t)。CSV 文件的默认分隔符是逗号字符。如果数据中的值包含选定的分隔符,则分隔符将用引号字符引用。例如,如果您的数据中的值包含

Time,stream,则该值将像在导出数据"Time,stream"中一样被引用。Timestream 使用的引号字符 LiveAnalytics 是双引号 (“)。FIELD_DELIMITER如果要在 CSV 中包含标题,请避免将回车符(ASCII 130D、十六进制、文本 '\ r')或换行符(ASCII 10、十六进制 0A、文本'\ n ')指定为,因为这将使许多解析器无法在生成的 CSV 输出中正确解析标题。 - 逃脱了_BY

-

escaped_by = '<character>', default: (\)以 CSV 格式导出数据时,此字段指定写入 S3 存储桶的数据文件中应被视为转义字符的字符。逃跑发生在以下场景中:

-

如果值本身包含引号字符 (“),则将使用转义字符对其进行转义。例如,如果值为

Time"stream,其中 (\) 是配置的转义字符,则将其转义为Time\"stream。 -

如果该值包含配置的转义字符,则会对其进行转义。例如,如果值为

Time\stream,则将其转义为Time\\stream。

注意

如果导出的输出包含诸如数组、行或时间序列之类的复杂数据类型,则会将其序列化为 JSON 字符串。以下为示例。

数据类型 实际价值 如何以 CSV 格式对值进行转义 [序列化的 JSON 字符串] 数组

[ 23,24,25 ]"[23,24,25]"行

( x=23.0, y=hello )"{\"x\":23.0,\"y\":\"hello\"}"时间序列

[ ( time=1970-01-01 00:00:00.000000010, value=100.0 ),( time=1970-01-01 00:00:00.000000012, value=120.0 ) ]"[{\"time\":\"1970-01-01 00:00:00.000000010Z\",\"value\":100.0},{\"time\":\"1970-01-01 00:00:00.000000012Z\",\"value\":120.0}]" -

- 包含标题

-

include_header = 'true' , default: 'false'以 CSV 格式导出数据时,此字段允许您将列名作为导出的 CSV 数据文件的第一行。

可接受的值为 “真” 和 “假”,默认值为 “假”。诸如

escaped_by和之类的文本转换选项也field_delimiter适用于标题。注意

包括标题时,请务必不要选择回车符(ASCII 13、十六进制 0D、文本 '\ r')或换行符(ASCII 10、十六进制 0A、文本'\ n ')作为标题

FIELD_DELIMITER,因为这将使许多解析器无法在生成的 CSV 输出中正确解析标题。 - 最大文件大小

-

max_file_size = 'X[MB|GB]' , default: '78GB'此字段指定该

UNLOAD语句在 Amazon S3 中创建的最大文件大小。该UNLOAD语句可以创建多个文件,但是写入 Amazon S3 的每个文件的最大大小将接近该字段中指定的大小。该字段的值必须介于 16 MB 到 78 GB 之间(含)。你可以用整数(例如)或小数(如

0.5GB或24.7MB)来指定。12GB默认值为 78 GB。实际文件大小是写入文件时的近似值,因此实际最大大小可能不完全等于您指定的数字。