Updating a trail with the CloudTrail console

This section describes how to change trail settings.

To convert a single-Region trail to a multi-Region trail, or update a multi-Region trail to log events in only a single Region, you must use the AWS CLI. For more information about how to convert a single-Region trail to a multi-Region trail, see Converting a single-Region trail to a multi-Region trail. For more information about how to update a multi-Region trail to log events in a single Region, see Converting a multi-Region trail to a single-Region trail.

If you've enabled CloudTrail management events in Amazon Security Lake, you are required to maintain at least one organizational trail that is multi-Region and logs both read and write management events. You cannot update a

qualifying trail in such a way that it fails to meet the Security Lake requirement. For example, by changing the trail to single-Region, or by turning off the logging of read or write management events.

Note

CloudTrail updates organization trails in member accounts even if a resource validation fails. Examples of validation failures include:

-

an incorrect Amazon S3 bucket policy

-

an incorrect Amazon SNS topic policy

-

inability to deliver to a CloudWatch Logs log group

-

insufficient permission to encrypt using a KMS key

A member account with CloudTrail permissions can see any validation failures for an organization trail by viewing the trail's details page on the CloudTrail console, or by running the AWS CLI get-trail-status command.

To update a trail with the AWS Management Console

Sign in to the AWS Management Console and open the CloudTrail console at https://console.aws.amazon.com/cloudtrail/

. -

In the navigation pane, choose Trails, and then choose a trail name.

-

In General details, choose Edit to change the following settings. You cannot change the name of a trail.

-

Apply trail to my organization - Change whether this trail is an AWS Organizations organization trail.

Note

Only the management account for the organization can convert an organization trail to a non-organization trail, or convert a non-organization trail to an organization trail.

-

Trail log location - Change the name of the S3 bucket or prefix in which you are storing logs for this trail.

-

Log file SSE-KMS encryption - Choose to enable or disable encrypting log files with SSE-KMS instead of SSE-S3.

-

Log file validation - Choose to enable or disable validation of the integrity of log files.

-

SNS notification delivery - Choose to enable or disable Amazon Simple Notification Service (Amazon SNS) notifications that log files have been delivered to the bucket specified for the trail.

-

To change the trail to an AWS Organizations organization trail, you can choose to enable the trail for all accounts in your organization. For more information, see Creating a trail for an organization.

-

To change the specified bucket in Storage location, choose Create new S3 bucket to create a bucket. When you create a bucket, CloudTrail creates and applies the required bucket policies. If you choose to create a new S3 bucket, your IAM policy needs to include permission for the

s3:PutEncryptionConfigurationaction because by default server-side encryption is enabled for the bucket.Note

If you chose Use existing S3 bucket, specify a bucket in Trail log bucket name, or choose Browse to choose a bucket. The bucket policy must grant CloudTrail permission to write to it. For information about manually editing the bucket policy, see Amazon S3 bucket policy for CloudTrail.

To make it easier to find your logs, create a new folder (also known as a prefix) in an existing bucket to store your CloudTrail logs. Enter the prefix in Prefix.

-

For Log file SSE-KMS encryption, choose Enabled if you want to encrypt your log files and digest files using SSE-KMS encryption instead of SSE-S3 encryption. The default is Enabled. If you don't enable SSE-KMS encryption, your log files and digest files are encrypted using SSE-S3 encryption. For more information about SSE-KMS encryption, see Using server-side encryption with AWS Key Management Service (SSE-KMS). For more information about SSE-S3 encryption, see Using Server-Side Encryption with Amazon S3-Managed Encryption Keys (SSE-S3).

If you enable SSE-KMS encryption, choose a New or Existing AWS KMS key. In AWS KMS Alias, specify an alias, in the format

alias/MyAliasName. For more information, see Updating a resource to use your KMS key with the console. CloudTrail also supports AWS KMS multi-Region keys. For more information about multi-Region keys, see Using multi-Region keys in the AWS Key Management Service Developer Guide.Note

You can also type the ARN of a key from another account. For more information, see Updating a resource to use your KMS key with the console. The key policy must allow CloudTrail to use the key to encrypt your log files and digest files, and allow the users you specify to read log files or digest files in unencrypted form. For information about manually editing the key policy, see Configure AWS KMS key policies for CloudTrail.

-

For Log file validation, choose Enabled to have log digests delivered to your S3 bucket. You can use the digest files to verify that your log files did not change after CloudTrail delivered them. For more information, see Validating CloudTrail log file integrity.

-

For SNS notification delivery, choose Enabled to be notified each time a log is delivered to your bucket. CloudTrail stores multiple events in a log file. SNS notifications are sent for every log file, not for every event. For more information, see Configuring Amazon SNS notifications for CloudTrail.

If you enable SNS notifications, for Create a new SNS topic, choose New to create a topic, or choose Existing to use an existing topic. If you are creating multi-Region trail, SNS notifications for log file deliveries from all enabled Regions are sent to the single SNS topic that you create.

If you choose New, CloudTrail specifies a name for the new topic for you, or you can type a name. If you choose Existing, choose an SNS topic from the drop-down list. You can also enter the ARN of a topic from another Region or from an account with appropriate permissions. For more information, see Amazon SNS topic policy for CloudTrail.

If you create a topic, you must subscribe to the topic to be notified of log file delivery. You can subscribe from the Amazon SNS console. Due to the frequency of notifications, we recommend that you configure the subscription to use an Amazon SQS queue to handle notifications programmatically. For more information, see Getting started with Amazon SNS in the Amazon Simple Notification Service Developer Guide.

-

-

In CloudWatch Logs, choose Edit to change settings for sending CloudTrail log files to CloudWatch Logs. Choose Enabled in CloudWatch Logs to enable sending log files. For more information, see Sending events to CloudWatch Logs.

-

If you enable integration with CloudWatch Logs, choose New to create a new log group, or Existing to use an existing one. If you choose New, CloudTrail specifies a name for the new log group for you, or you can type a name.

-

If you choose Existing, choose a log group from the drop-down list.

-

Choose New to create a new IAM role for permissions to send logs to CloudWatch Logs. Choose Existing to choose an existing IAM role from the drop-down list. The policy statement for the new or existing role is displayed when you expand Policy document. For more information about this role, see Role policy document for CloudTrail to use CloudWatch Logs for monitoring.

Note

-

When you configure a trail, you can choose an S3 bucket and SNS topic that belong to another account. However, if you want CloudTrail to deliver events to a CloudWatch Logs log group, you must choose a log group that exists in your current account.

-

Only the management account can configure a CloudWatch Logs log group for an organization trail using the console. The delegated administrator can configure a CloudWatch Logs log group using the AWS CLI or CloudTrail

CreateTrailorUpdateTrailAPI operations.

-

-

-

In Tags, choose Edit to change, add, or delete tags on the trail. You can add up to 50 tag key pairs to help you identify, sort, and control access to your trail. Tags can help you identify both your CloudTrail trails and the Amazon S3 buckets that contain CloudTrail log files. You can then use resource groups for your CloudTrail resources. For more information, see AWS Resource Groups and Tags.

-

In Management events, choose Edit to change management event logging settings.

-

For API activity, choose if you want your trail to log Read events, Write events, or both. For more information, see Management events.

-

Choose Exclude AWS KMS events to filter AWS Key Management Service (AWS KMS) events out of your trail. The default setting is to include all AWS KMS events.

The option to log or exclude AWS KMS events is available only if you log management events on your trail. If you choose not to log management events, AWS KMS events are not logged, and you cannot change AWS KMS event logging settings.

AWS KMS actions such as

Encrypt,Decrypt, andGenerateDataKeytypically generate a large volume (more than 99%) of events. These actions are now logged as Read events. Low-volume, relevant AWS KMS actions such asDisable,Delete, andScheduleKey(which typically account for less than 0.5% of AWS KMS event volume) are logged as Write events.To exclude high-volume events like

Encrypt,Decrypt, andGenerateDataKey, but still log relevant events such asDisable,DeleteandScheduleKey, choose to log Write management events, and clear the check box for Exclude AWS KMS events. -

Choose Exclude Amazon RDS Data API events to filter Amazon Relational Database Service Data API events out of your trail. The default setting is to include all Amazon RDS Data API events. For more information about Amazon RDS Data API events, see Logging Data API calls with AWS CloudTrail in the Amazon RDS User Guide for Aurora.

-

-

Important

Steps 7-11 are for configuring data events using advanced event selectors, which is the default. Advanced event selectors let you configure more data event types and offer fine-grained control over which data events your trail captures. If you plan to log network activity events, you must use advanced event selectors. If you are using basic event selectors, see Updating data event settings with basic event selectors, then return to step 12 of this procedure.

In Data events, choose Edit to change data event logging settings. By default, trails don't log data events. Additional charges apply for logging data events. For CloudTrail pricing, see AWS CloudTrail Pricing

. For Resource type, choose the resource type on which you want to log data events. For more information about available resource types, see Data events.

-

Choose a log selector template. You can choose a predefined template, or choose Custom to define your own event collection conditions.

You can choose from the following predefined templates:

-

Log all events – Choose this template to log all events.

-

Log only read events – Choose this template to log only read events. Read-only events are events that do not change the state of a resource, such as

Get*orDescribe*events. -

Log only write events – Choose this template to log only write events. Write events add, change, or delete resources, attributes, or artifacts, such as

Put*,Delete*, orWrite*events. -

Log only AWS Management Console events – Choose this template to log only events originating from the AWS Management Console.

-

Exclude AWS service initiated events – Choose this template to exclude AWS service events, which have an

eventTypeofAwsServiceEvent, and events initiated with AWS service-linked roles (SLRs).

Note

Choosing a predefined template for S3 buckets enables data event logging for all buckets currently in your AWS account and any buckets you create after you finish creating the trail. It also enables logging of data event activity performed by any user or role in your AWS account, even if that activity is performed on a bucket that belongs to another AWS account.

If the trail applies only to one Region, choosing a predefined template that logs all S3 buckets enables data event logging for all buckets in the same Region as your trail and any buckets you create later in that Region. It will not log data events for Amazon S3 buckets in other Regions in your AWS account.

If you're creating a multi-Region trail, choosing a predefined template for Lambda functions enables data event logging for all functions currently in your AWS account, and any Lambda functions you might create in any Region after you finish creating the trail. If you are creating a trail for a single Region (done by using the AWS CLI), this selection enables data event logging for all functions currently in that Region in your AWS account, and any Lambda functions you might create in that Region after you finish creating the trail. It does not enable data event logging for Lambda functions created in other Regions.

Logging data events for all functions also enables logging of data event activity performed by any user or role in your AWS account, even if that activity is performed on a function that belongs to another AWS account.

-

-

(Optional) In Selector name, enter a name to identify your selector. The selector name is a descriptive name for an advanced event selector, such as "Log data events for only two S3 buckets". The selector name is listed as

Namein the advanced event selector and is viewable if you expand the JSON view. -

If you selected Custom, in Advanced event selectors build an expression based on the values of advanced event selector fields.

Note

Selectors don't support the use of wildcards like

*. To match multiple values with a single condition, you may useStartsWith,EndsWith,NotStartsWith, orNotEndsWithto explicitly match the beginning or end of the event field.-

Choose from the following fields.

-

readOnly-readOnlycan be set to equals a value oftrueorfalse. Read-only data events are events that do not change the state of a resource, such asGet*orDescribe*events. Write events add, change, or delete resources, attributes, or artifacts, such asPut*,Delete*, orWrite*events. To log bothreadandwriteevents, don't add areadOnlyselector. -

eventName-eventNamecan use any operator. You can use it to include or exclude any data event logged to CloudTrail, such asPutBucket,GetItem, orGetSnapshotBlock. -

eventSource– The event source to include or exclude. This field can use any operator. -

eventType – The event type to include or exclude. For example, you can set this field to not equals

AwsServiceEventto exclude AWS service events. For a list of event types, see eventType in CloudTrail record contents for management, data, and network activity events. -

sessionCredentialFromConsole – Include or exclude events originating from an AWS Management Console session. This field can be set to equals or not equals with a value of

true. -

userIdentity.arn – Include or exclude events for actions taken by specific IAM identities. For more information, see CloudTrail userIdentity element.

-

resources.ARN- You can use any operator withresources.ARN, but if you use equals or does not equal, the value must exactly match the ARN of a valid resource of the type you've specified in the template as the value ofresources.type.Note

You can't use the

resources.ARNfield to filter resource types that do not have ARNs.For more information about the ARN formats of data event resources, see Actions, resources, and condition keys for AWS services in the Service Authorization Reference.

-

-

For each field, choose + Condition to add as many conditions as you need, up to a maximum of 500 specified values for all conditions. For example, to exclude data events for two S3 buckets from data events that are logged on your event data store, you can set the field to resources.ARN, set the operator for does not start with, and then paste in an S3 bucket ARN for which you do not want to log events.

To add the second S3 bucket, choose + Condition, and then repeat the preceding instruction, pasting in the ARN for or browsing for a different bucket.

For information about how CloudTrail evaluates multiple conditions, see How CloudTrail evaluates multiple conditions for a field.

Note

You can have a maximum of 500 values for all selectors on an event data store. This includes arrays of multiple values for a selector such as

eventName. If you have single values for all selectors, you can have a maximum of 500 conditions added to a selector. -

Choose + Field to add additional fields as required. To avoid errors, do not set conflicting or duplicate values for fields. For example, do not specify an ARN in one selector to be equal to a value, then specify that the ARN not equal the same value in another selector.

-

-

To add another resource type on which to log data events, choose Add data event type. Repeat steps 3 through this step to configure advanced event selectors for the resource type.

-

In Network activity events, choose Edit to change network activity event logging settings. By default, trails don't log network activity events. Additional charges apply for logging network activity events. For more information, see AWS CloudTrail Pricing

. To log network activity events, do the following:

-

From Network activity event source, choose the source for network activity events.

-

In Log selector template, choose a template. You can choose to log all network activity events, log all network activity access denied events, or choose Custom to build a custom log selector to filter on multiple fields, such as

eventNameandvpcEndpointId. -

(Optional) Enter a name to identify the selector. The selector name is listed as Name in the advanced event selector and is viewable if you expand the JSON view.

-

In Advanced event selectors build expressions by choosing values for Field, Operator, and Value. You can skip this step if you are using a predefined log template.

-

For excluding or including network activity events, you can choose from the following fields in the console.

-

eventName– You can use any operator witheventName. You can use it to include or exclude any event, such asCreateKey. -

errorCode– You can use it to filter on an error code. Currently, the only supportederrorCodeisVpceAccessDenied. -

vpcEndpointId– Identifies the VPC endpoint that the operation passed through. You can use any operator withvpcEndpointId.

-

-

For each field, choose + Condition to add as many conditions as you need, up to a maximum of 500 specified values for all conditions.

-

Choose + Field to add additional fields as required. To avoid errors, do not set conflicting or duplicate values for fields.

-

-

To add another event source for which you want to log network activity events, choose Add network activity event selector.

-

Optionally, expand JSON view to see your advanced event selectors as a JSON block.

-

-

In Insights events, choose Edit if you want your trail to log CloudTrail Insights events.

In Event type, select Insights events.

In Insights events, choose API call rate, API error rate, or both. You must be logging Write management events to log Insights events for API call rate. You must be logging Read or Write management events to log Insights events for API error rate.

CloudTrail Insights analyzes management events for unusual activity, and logs events when anomalies are detected. By default, trails don't log Insights events. For more information about Insights events, see Working with CloudTrail Insights. Additional charges apply for logging Insights events. For CloudTrail pricing, see AWS CloudTrail Pricing

. Insights events are delivered to a different folder named

/CloudTrail-Insightof the same S3 bucket that is specified in the Storage location area of the trail details page. CloudTrail creates the new prefix for you. For example, if your current destination S3 bucket is namedamzn-s3-demo-bucket/AWSLogs/CloudTrail/, the S3 bucket name with a new prefix is namedamzn-s3-demo-bucket/AWSLogs/CloudTrail-Insight/. -

When you are finished changing settings on your trail, choose Update trail.

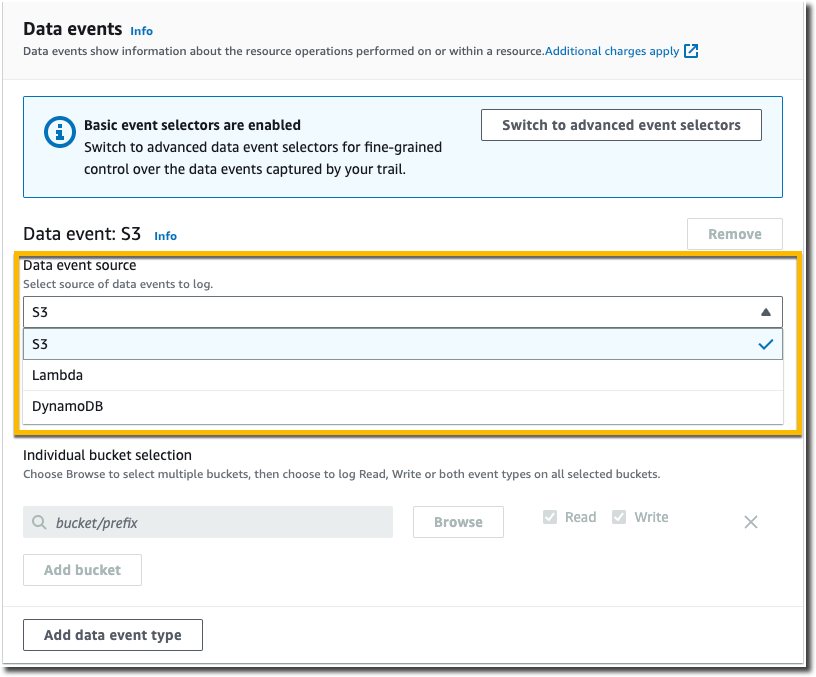

Updating data event settings with basic event selectors

You can use advanced event selectors to configure all data event types as well as network activity events. Advanced event selectors allow you to create fine-grained selectors to log only those events of interest.

If you use basic event selectors to log data events, you're limited

to logging data events for Amazon S3 buckets, AWS Lambda functions, and Amazon DynamoDB tables.

You can't filter on the eventName field using basic event

selectors. You also can't log network activity events.

Use the following procedure to configure data event settings using basic event selectors.

-

In Data events, choose Edit to change data event logging settings. With basic event selectors, you can specify logging data events for Amazon S3 buckets, AWS Lambda functions, DynamoDBtables, or a combination of those resources. Additional data event resource types are supported with advanced event selectors. By default, trails don't log data events. Additional charges apply for logging data events. For more information, see Data events. For CloudTrail pricing, see AWS CloudTrail Pricing

. For Amazon S3 buckets:

-

For Data event source, choose S3.

-

You can choose to log All current and future S3 buckets, or you can specify individual buckets or functions. By default, data events are logged for all current and future S3 buckets.

Note

Keeping the default All current and future S3 buckets option enables data event logging for all buckets currently in your AWS account and any buckets you create after you finish creating the trail. It also enables logging of data event activity performed by any user or role in your AWS account, even if that activity is performed on a bucket that belongs to another AWS account.

If the trail applies only to one Region, choosing All current and future S3 buckets enables data event logging for all buckets in the same Region as your trail and any buckets you create later in that Region. It will not log data events for Amazon S3 buckets in other Regions in your AWS account.

-

If you leave the default, All current and future S3 buckets, choose to log Read events, Write events, or both.

-

To select individual buckets, empty the Read and Write check boxes for All current and future S3 buckets. In Individual bucket selection, browse for a bucket on which to log data events. To find specific buckets, type a bucket prefix for the bucket you want. You can select multiple buckets in this window. Choose Add bucket to log data events for more buckets. Choose to log Read events, such as

GetObject, Write events, such asPutObject, or both.This setting takes precedence over individual settings you configure for individual buckets. For example, if you specify logging Read events for all S3 buckets, and then choose to add a specific bucket for data event logging, Read is already selected for the bucket you added. You cannot clear the selection. You can only configure the option for Write.

To remove a bucket from logging, choose X.

-

-

To add another resource type on which to log data events, choose Add data event type.

-

For Lambda functions:

-

For Data event source, choose Lambda.

-

In Lambda function, choose All regions to log all Lambda functions, or Input function as ARN to log data events on a specific function.

To log data events for all Lambda functions in your AWS account, select Log all current and future functions. This setting takes precedence over individual settings you configure for individual functions. All functions are logged, even if all functions are not displayed.

Note

If you're creating a multi-Region trail, this selection enables data event logging for all functions currently in your AWS account, and any Lambda functions you might create in any Region after you finish creating the trail. If you are creating a trail for a single Region (done by using the AWS CLI), this selection enables data event logging for all functions currently in that Region in your AWS account, and any Lambda functions you might create in that Region after you finish creating the trail. It does not enable data event logging for Lambda functions created in other Regions.

Logging data events for all functions also enables logging of data event activity performed by any user or role in your AWS account, even if that activity is performed on a function that belongs to another AWS account.

-

If you choose Input function as ARN, enter the ARN of a Lambda function.

Note

If you have more than 15,000 Lambda functions in your account, you cannot view or select all functions in the CloudTrail console when creating a trail. You can still select the option to log all functions, even if they are not displayed. If you want to log data events for specific functions, you can manually add a function if you know its ARN. You can also finish creating the trail in the console, and then use the AWS CLI and the put-event-selectors command to configure data event logging for specific Lambda functions. For more information, see Managing trails with the AWS CLI.

-

-

To add another resource type on which to log data events, choose Add data event type.

-

For DynamoDB tables:

-

For Data event source, choose DynamoDB.

-

In DynamoDB table selection, choose Browse to select a table, or paste in the ARN of a DynamoDB table to which you have access. A DynamoDB table ARN is in the following format:

arn:partition:dynamodb:region:account_ID:table/table_nameTo add another table, choose Add row, and browse for a table or paste in the ARN of a table to which you have access.

-

-

To configure Insights events and other settings for your trail, go back to the preceding procedure in this topic, Updating a trail with the CloudTrail console.